By Richard Broughton, MTM’s Brand and Strategy Director

What happens to social media when you cannot tell what’s real and what’s AI-generated? Sit back and find out: it’s happening as we speak.

Just a couple of years ago, when AI didn’t feel like an existential threat to almost every aspect of our world, my reaction to a video on my social feed was pretty simple. If it was funny or worth returning to, I’d like it. If it was helpful or a good resource, I might save it. If it was moving or charitable, I might watch it twice before carrying on. What I didn’t do was launch a light-touch investigation to determine whether it was real or not.

From passive scrolling to private investigations

Oh, how I yearn for those simpler times.

Today, I find myself ever suspicious, treating every Ring doorbell camera, prank, or courtroom video with scepticism and mistrust. Equally, as someone in their forties who works across social media, I feel like my ability to determine what’s real or fake is a marker, a barometer if you will, representing the speed of my descent into boomerism. If I can’t easily spot videos made with AI, what am I doing? So concerned have I become that I find myself constantly looking for the telltale signs.

I’m scanning for blurred watermarks and overly neat, clipped conversations. I’m looking for things that don’t quite stack up or don’t look quite right. I’m checking profiles and scrolling through the comment section. You could call it paranoia, but it’s really not. So much of my FYP is now AI-generated, and that’s just what I notice. So, my investigatory social sideline is now a habit, but one built out of necessity, because the platforms are filling up with content that looks plausible at a glance and is clearly profitable enough for the platform to keep pushing it to my feed.

When content supply goes infinite

Meta is not exactly being coy about where it sees things heading. In September last year, it introduced “Vibes”, described as a way to “create and share short-form, AI-generated videos” inside the Meta apps. In February this year, it was reported that Meta is now testing a standalone Vibes app, effectively putting AI video and an AI video feed in its own dedicated product.

If you run a social platform, you can see the rationale. More content keeps people in the feed, and more time in the feed creates more opportunities for ad inventory. Generative tools are turning content supply into something closer to a production line.

The awkward bit, and where I keep getting hung up, is trust. Reuters Institute’s 2025 Digital News Report says 58% of respondents are concerned about being able to tell the difference between what’s true or false online. When the audience has that level of doubt, outcomes become less predictable, and media becomes harder for brands to price.

I am not saying that social media will disappear tomorrow. Meta, TikTok and YouTube have built platforms that are deeply habitual, and people rarely walk away from addictive habits overnight. There is a much bigger and darker conversation to be had about propaganda, misinformation and the role AI-generated content could play in shaping public discourse, but the issue I want to focus on here is commercial: what is social ad space worth to a brand if every impression comes with a side order of doubt and audiences feel the need to check whether what they are seeing is real?

Shit in, shit out

A lot of debate about AI content gets stuck on quality. However, comments such as ‘the hands look odd’, ‘the physics are off’ or ‘the conversation is too neat’ are being heard less and less. Those issues have faded significantly compared to just six months ago, with new models and easier prompting reducing the number of improbable artefacts you see, and making issues easier to fix if they do occur. The more important change is economic: if generative creative becomes more reliable and consistent, AI content becomes vastly cheaper to produce than the human behaviour and interactions it imitates.

Kapwing’s research suggested that between 21% and 33% of YouTube’s feed may consist of low-quality AI or “brainrot” videos, and that a brand-new account was served 104 “AI slop” videos in its first 500 Shorts. That is some nefarious platform logic in action. Recommendation systems are designed to serve up precisely what keeps you watching, and cheap synthetic content can be generated, tested, and iterated at a speed humans cannot match. If you are a marketer, it is worth being honest about what this does to the meaning of a view and the value of reach. As the volume of content explodes, and anyone can generate anything, getting seen stops being the primary challenge, and trust and credibility emerge as the key metrics for success.

The authenticity premium

So where do we go from here? I think it’s likely that a premium will emerge for verifiably authentic content. Inventory (ad placements) that can demonstrate provenance, context, and real audiences should become more valuable.

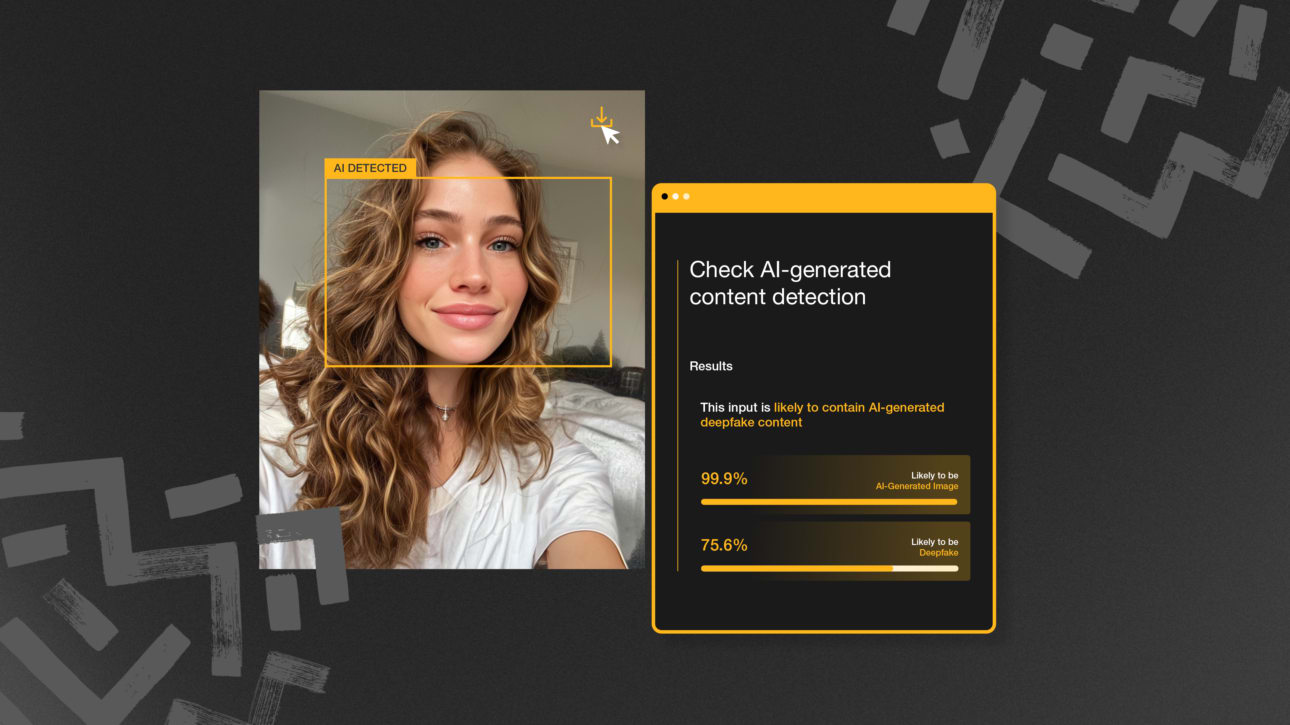

Before that happens, platforms will have to stabilise things and put the right infrastructure in place. We’re already seeing early versions of it: content credentials, AI labels, provenance metadata, creator verification, and tighter moderation policies around synthetic media. None of these moves solves the problem on its own, but together they show that the topic is already being discussed and indicate where the industry is heading.

At a practical level, it’s about managing uncertainty. When your own eyes aren’t enough, you look for other proof: who produced it, how it was generated, and whether that process can be demonstrated.

If you are a platform or publisher, provenance becomes a feature you can charge for. If you are a brand, provenance becomes a buying signal in the same way that viewability, fraud controls, and brand safety became non-negotiable once the market matured.

CPMs come under pressure

The common assumption is that the threat is users leaving, and they might, eventually, in some segments. The more immediate pressure point is advertisers, because advertisers have alternatives, and they react quickly to uncertainty.

Social ad expenditure is huge. WARC’s “Big Picture: Social media 2025” forecast put global social ad spend at $286.2bn in 2025 and expected it to exceed $300bn in 2026. Those numbers will not evaporate, but how much of that spend stays at a premium price is a fair question.

Brand safety concerns are already there. Integral Ad Science’s 2025 UK Industry Pulse report said 34% of advertisers expressed concern about ads appearing alongside risky content, and 31% specifically cited AI deepfakes. It also noted that 77% of media professionals see third-party measurement and optimisation as important to protect ads from being served alongside deepfakes.

Add the platform measurement gap, where advertisers rely heavily on platform-reported performance data, and you can see why buyers might want to start negotiating harder. DoubleVerify’s 2025 Global Insights report on “walled garden” platforms such as Meta, Google, and TikTok (which control both the ad inventory and the measurement around it) highlights marketers’ growing concern about brand suitability and content adjacency as AI-generated material increases.

Put all that together, and you get a simple prediction. CPMs on anything-goes feeds will come under increasing pressure as brands refuse to pay top dollar to appear next to uncertainty, even if people keep scrolling.

Welcome to the comment section

One of the more revealing shifts is how often the comments section is now doing the job the content used to do. It’s no longer unusual to see the top comment under a viral post debating whether it’s AI-generated before anyone discusses what actually happens in the video.

The BBC’s Joe Tidy wrote about this earlier this year, describing how comment sections under viral AI posts are increasingly dominated by users calling out what they believe to be synthetic, sometimes generating more engagement than the original clip itself.

Academic research also backs up what many users are already feeling. A 2025 University of Exeter report described social media comments as “quick warning signals” that can help users spot misinformation, while also cautioning that misleading replies can undermine trust in accurate information. Effectively, comments can act as a valuable filter, but they can just as easily distort the truth.

Platforms have started to formalise this instinct, although not specifically because of AI. Community-led context systems, such as Community Notes on X, were introduced to address disputed or misleading information more broadly, but they have the same sort of effect. A University of Washington-led study found that posts with Community Notes attached were less likely to go viral, with reposts and likes falling after a note was added.

Verification by committee

Community Notes are not an AI solution. They are a response to contested information and the cesspit of lying incels and racists that seem to exclusively occupy X today. But they do illustrate something important. Once context is added to a post, the user’s behaviour changes and engagement shifts. The presence of an explicit challenge alters how the content travels and our enthusiasm for engaging with it.

The more relevant point isn’t whether the comments and notes always get it right. It’s that users have started to treat other users as part of the verification process. They are no longer relying solely on the content in front of them or the platform. Instead, they look sideways for confirmation, and that behavioural change could carry significant commercial weight.

If the audience routinely pauses to run a trust check before reacting, the feed becomes slower and more argumentative. Engagement still happens, but it happens differently, and the meaning of a like or a view shifts.

For brands, the issue isn’t that they are buying a specific piece of AI-generated content and inheriting its comment thread. It’s that they are buying into an environment where scrutiny has become more normal.

If audiences start to question everything they see, that mindset travels. It shapes how people process ads, as well as organic posts. A feed that feels argumentative or contested is a different commercial environment from one that feels straightforward.

Follow the money

Money has always followed certainty, so when an environment starts to feel unstable, spend tends to move towards channels and activities that can show something tangible about who’s watching, why they are there, and the context the ad appears in.

You can see it most in environments where the audience relationship is much easier to understand. Premium publishers with strong editorial identities, podcasts with loyal audiences, newsletters with direct subscriber relationships, and live formats such as conferences, events and even live streams all offer something the open feed will increasingly struggle with.

That matters more than it sounds. A newsletter subscriber, a podcast listener, or someone who has chosen to spend half a day at an event is giving you a much stronger signal than someone being served their fifteenth AI-generated video of the morning. You may not get the same reach, but you do get a far clearer read on intent, context and attention, and I think that will start to matter more as the feed gets noisier.

Retail media is the clearest large-scale example. It sits on transaction data and logged-in identity, so you can see who bought what and connect exposure to actual behaviour. WARC forecasts global retail media investment at $174.9bn in 2025, rising to $196.7bn in 2026. That sort of growth is not happening by accident. It reflects a market that is increasingly willing to pay for environments where audience signals can be shown rather than guessed at.

Connected TV is benefiting from similar dynamics. It is not immune to fraud or measurement gaps, nothing is, but the identity and distribution chain is still tighter than an open social feed being flooded by synthetic content designed to hold attention at any cost.

WPP Media’s “This Year Next Year” forecast in December 2025 put global ad revenue at $1.14 trillion in 2025, excluding US political spend, with further growth projected into 2026. In a market of that size, even relatively modest reallocations represent billions moving from one kind of media environment to another, which is why this stops being an abstract debate about AI and becomes a question about where budgets are safest.

Platforms respond

It would be naïve to think platforms will ignore these risks and potential threats. Their ability to charge the rates they do depends on maintaining enough confidence that brands will continue to pay for reach and exposure.

For Meta and the like, I think the challenge is as much technical as it is commercial. Detecting synthetic content is not getting easier. NIST’s work on synthetic content risk highlights that provenance tracking and watermarking can help establish authenticity, but that detection methods and adversarial tactics continue to evolve. In other words, the arms race isn’t slowing down.

The Verge also highlighted how fragile metadata-based labelling can be, particularly when provenance data is stripped as content moves between platforms or when labels are poorly delivered to users. A badge is only useful if it survives distribution and is visible at the point of consumption.

Regulation adds another layer. Article 50 of the EU AI Act introduces transparency obligations for AI-generated or AI-manipulated content, including deepfakes. That does not solve the trust problem, but it formalises responsibility and raises the compliance bar. I think there is a near future where all AI-generated content is legally required to include a disclaimer.

Some environments will lean into verified creators, verified capture processes and tighter measurement controls. Others will operate with looser standards and greater volatility. For brands, the implication is straightforward: inventory that can demonstrate its authenticity will command a premium. The rest will offer cheaper reach and more chaos.

Social was meant to be social

It’s worth remembering what social media was supposed to be.

At its simplest, it was about sharing what you were up to. Photos from the weekend. A new job. A joke. A view on something happening in the world. You followed friends, people you admired, or people whose lives you found interesting. You saw slices of reality, edited and curated, but for the most part, human.

Over time, that shifted. The feed moved from friends to creators, from creators to algorithms, and from algorithms to whatever keeps you watching. Even so, most people still use social platforms to track people, not just content. They follow individuals. They follow personalities. They follow people’s lives.

That’s why the current moment feels slightly off. If the feed becomes saturated with AI-generated clips designed purely to keep you on the teat or trigger a reaction, the centre of gravity moves even further away from real experiences. You can tolerate some of that. Everyone understands that not every video is a Channel 4 documentary. But if the balance tips too far towards AI clickbait, something has to break, doesn’t it? Surely the platforms start to feel less social and more like an entertainment engine, if we haven’t already crossed that Rubicon.

The question is whether people push back. Will we see a return to smaller, friend-led networks? Will new platforms emerge promising an AI-light or AI-transparent experience? We’ve seen versions of this before. BeReal briefly surged by offering unfiltered moments. Private group chats already function as a refuge from the noise of the public feed.

Or perhaps most people will simply accept the shift. If the content is entertaining enough, synthetic or not, the habit remains intact. Platforms are exceptionally good at sustaining habits after all.

The most likely outcome, in my view, is coexistence. A layer of social built around spectacle and generative novelty. A layer built around people you actually know. A layer where verification is critical because the stakes are higher.

For advertisers, that distinction also matters. The original promise of social media was proximity to real people. If that proximity becomes harder to feel, brands need to decide whether they are buying stimulation or actual connection.

Where will we end up?

I don’t think social media will implode because synthetic, AI-generated content floods the feed. These platforms still provide distraction, status signalling, and stimulation. Plenty of users will accept AI-generated content without complaint as long as it entertains them, but the economics of advertising are less forgiving.

Advertising depends on more than eyeballs. It relies on the space feeling stable enough for the message to land. So, when that stability weakens, advertisers will push back.

Placements that can show where they came from and who is actually watching will hold their value. Feeds that feel chaotic will still sell reach, just at a discount. And over time, I think that gap will become a chasm.

If you’re questioning the value of your social spend in an AI-heavy feed, it might be time to rethink the plan. Talk to our team about building a more trusted social presence.